Attacking Big Business

Who Needs Rep?

Larger organisations often employ reputational filtering of web traffic to defend against delivery of malicious code and the exfiltration of data if a compromise were ever to occur. It's an effective control provided by many next-generation firewalls and web proxies, including newer cloud-based solutions such as Zscaler.

Reputational filtering typically blocks websites known to be malicious, performs antivirus scanning of all traffic, and, crucially for us when performing a simulated attack, warns end-users when visiting "non-categorised" sites. Any URLs and domains used as part of an attack now require user interaction in a web browser. This effectively rules out using newly stood-up infrastructure at both the delivery and exfiltration stages of an attack, as these activities are performed without the victim's knowledge. The only options left to the attacker would be to "build" reputation over time, or alternatively, cheat the system.

Unfortunately, many reputational filtering solutions are fundamentally flawed - classification of URLs and domains can sometimes be as simple as a form submission with no human verification and no real inspection of the content hosted on a site. An attacker could feasibly submit their infrastructure to all major providers and wait until the sites are reviewed and assigned an appropriate permitted category. We've used this method for several years to good effect.

The problem becomes more difficult to overcome when the reputation filter does not permit public submission of sites. For example, the Cisco Web Security Appliance (WSA) is one solution that only allows Cisco customers to submit classification requests; several other providers have adopted a similar approach. Even if this could be overcome, the task becomes insurmountable to most when the exact reputation filter in use is unknown in advance.

Considerable effort can be spent in gaining the required reputation to deliver a successful attack. Luckily for network defenders, vendors often react quickly to reports of misclassification and misuse; if an attack is reported or detected via some other control, the hard-earned reputation can be quickly rescinded by the vendor, thwarting future attacks and requiring the attacker to return to the drawing board. There is, however, a much easier option for the attacker.

Delivery And Exfiltration Using Trusted Sites

Some sites are implicitly "trusted" by all simply due to their widespread use and huge popularity. Examples include Google, Amazon, Microsoft, Twitter and Facebook - there are of course many other hugely popular sites used across the globe. If an attacker uses any of these trusted sites in their attack path, reputational filtering will be ineffective. This is a widely known limitation of reputational filtering, and extremely difficult to detect when only a small number of individuals are targeted in a campaign.

The idea is simple - any "trusted" site that allows arbitrary user content can be used to deliver exploit code and payloads. Examples include Facebook posts, shared Google Drive files and files hosted in Amazon S3. In most cases, a fully functional command and control (C2) channel can be established if running malware can itself "post" content to the chosen trusted service.

Staying Under The Radar

Real attackers utilise complex obfuscation routines and encryption to secure C2 channels; Cyberis adopts a similar approach when conducting a simulated attack. Let's revisit our previous example with a simple symmetric encryption key (the word ‘secret’):

One advantage in a controlled simulated attack when compared to a real-world attack is the encryption key could be a secret that only the victim and we know. For example, a specially crafted internal DNS TXT record could contain the secret key, or alternatively an environment variable set on the victim’s machine. If our malware were ever to find its way to a target outside of the agreed scope, we can be confident that it simply will not run. It also helps protect our malware from researchers and antivirus vendors.

Of course, the encrypted communications are likely to look suspicious if posted to a public Facebook wall, but a file in an S3 bucket, or a secret Gist on GitHub, is much more likely to go unnoticed.

Tripwire Defences

As a young kid reading Famous Five and Secret Seven books, I found simple pleasure in setting up "tripwires" to detect if my younger sibling had accessed my room. A piece of paper between the door latch and frame, a strand of cotton tied to the door handle, and a sliver of Sellotape between the architrave and door were my go-to homemade tripwires. If any had been disturbed, it was a sure-fire sign of an intruder.

Today I find myself setting up similar tripwires to detect if my carefully orchestrated attacks have been picked up by the blue team. This is easy when hosting on infrastructure under our full control, but when utilising cloud services and social network sites, it is not trivial to detect if someone is on to us. We do, however, have some tricks available to us.

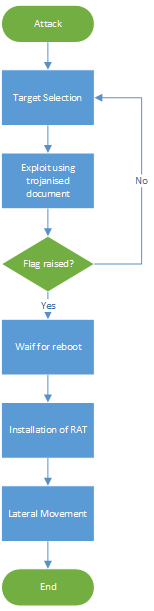

Bootstrapping

We deliver all our attacks in several stages for two main reasons. Firstly, we do not want to reveal our most valued Remote Access Trojan (RAT) to the world. We only want to deliver the implant to "successfully compromised" individuals who we are sure have not reported the incident to security teams. Secondly, bootstrapping gives us the opportunity to spot if the blue team has found us - the tripwire.

Bootstrapping is the term we use to describe installation of a very basic downloader that executes upon the victim's next login. We also raise a flag to show that the bootstrap has successfully completed - at this point we know we simply must wait a day or two until we get the first real "beacon". This gives us time to plan the next phases of the attack, clean down the initial exploit (for example, removing hosted trojanised files and scripts), and wait to see if the tripwire is triggered.

The initial flag of success can be subtle: a Facebook or Twitter 'Like' from a certain user, a starred Gist, or a certain message posted to a public wall or bulletin board. When we see this flag being raised, we can initiate the clean-up. At this stage we're monitoring to ensure we've achieved a foothold without being caught.

The tripwire is obvious; if we see the initial exploit being requested for a second time, or if we see phase 3 being initiated (indicating the next login) within a very short period, we would suspect our attack has been detected – our attack is probably being executed in a sandbox. If this is the case, we can "rinse and repeat" with new email accounts, different trusted sites and alternative phishing scenarios.

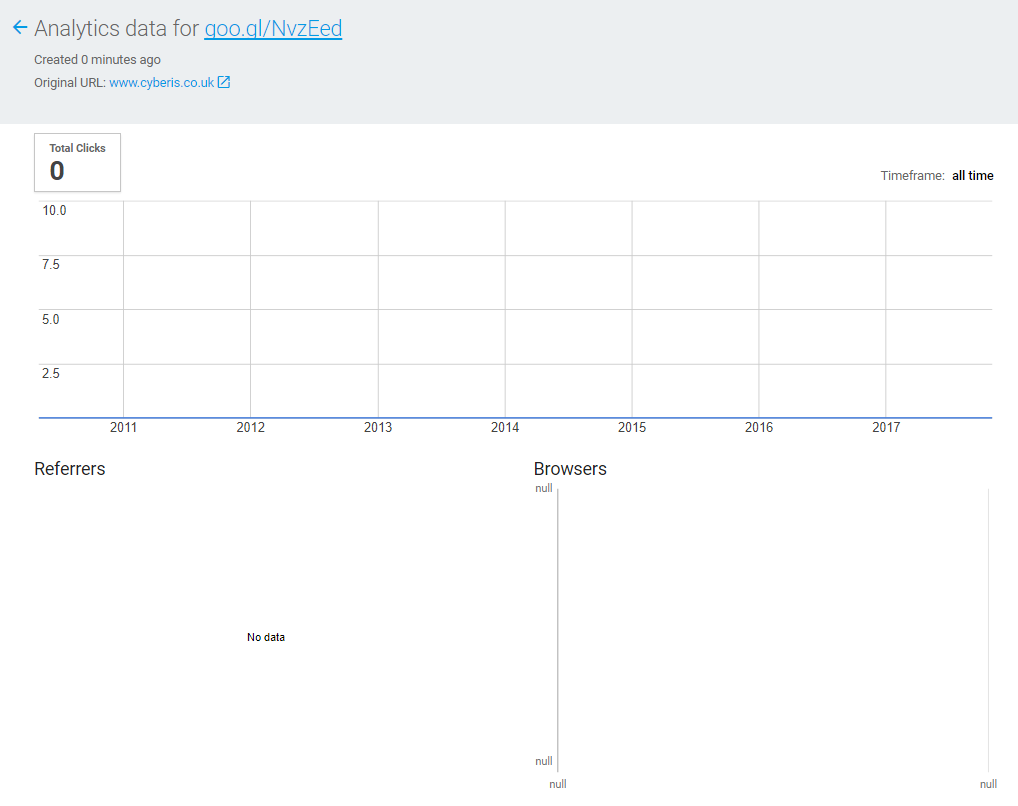
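
The tripwire rules described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name, the 30-minute threshold and the idea of feeding it lists of request timestamps are all hypothetical, and how those timestamps are collected depends entirely on the hosting service in use.

```python
from datetime import datetime, timedelta

# Hypothetical threshold: a real user is unlikely to log in again this quickly.
MIN_RELOGIN_GAP = timedelta(minutes=30)


def tripwire_triggered(exploit_requests, next_stage_requests,
                       min_gap=MIN_RELOGIN_GAP):
    """Return the reasons the tripwire fired (an empty list if it did not).

    exploit_requests    -- datetimes at which the initial exploit was fetched
    next_stage_requests -- datetimes at which the next-login stage was fetched
    """
    reasons = []

    # Rule 1: the initial exploit should only ever be requested once.
    if len(exploit_requests) > 1:
        reasons.append("initial exploit requested more than once")

    # Rule 2: the next-login stage firing almost immediately suggests a sandbox.
    if exploit_requests and next_stage_requests:
        gap = min(next_stage_requests) - min(exploit_requests)
        if gap < min_gap:
            reasons.append("next stage initiated too soon after the exploit")

    return reasons
```

An empty list means the timings look like those of a genuine victim; any entry is a prompt to abandon the current infrastructure and start again.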

URL Shortening

Several URL shortening services provide analytics regarding who has followed the link. We've made some interesting observations when using certain services in our attacks, namely that the analytics do not capture requests from our malware, only those from real browsers. This is useful: if we see any increase in click counts, we can suspect someone has found our attack. Furthermore, we can see where in the world they're coming from, and the browser they're using. We now have another tripwire set up.

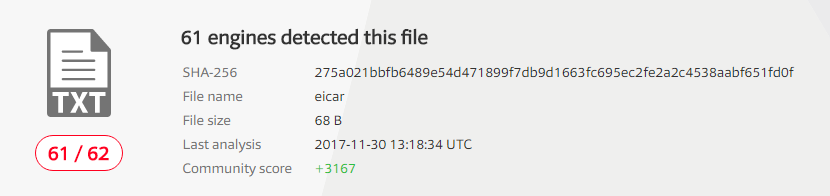
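
As a rough sketch of this check, the snippet below compares two snapshots of click counts and reports any link whose count has gone up. The function name and the dictionary layout are assumptions made for illustration; fetching the counts from a particular shortening service is deliberately left out, as each service exposes its analytics differently.

```python
def new_clicks(previous_counts, current_counts):
    """Return {short_link: increase} for every link whose click count rose.

    Both arguments map a short link to its browser click count, as taken
    from the shortening service's analytics at two points in time.
    """
    increases = {}
    for link, count in current_counts.items():
        before = previous_counts.get(link, 0)
        if count > before:
            increases[link] = count - before
    return increases
```

Because the implant's own requests are not counted, any non-empty result points to a human (or an analyst's browser) following the link.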

Monitoring Of Community Sites

VirusTotal is a hugely valuable resource for blue teams, researchers and network defenders. It's also a useful tripwire for the attacker, or for us during a simulated attack. Good OPSEC of course prevents us uploading our “malware” directly to such sites; however, regular checks or alerts for any uploads matching the checksum values of our files are equally valuable - if we ever see a hit, we know we've been found.
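
Checking for a hit starts with knowing the checksum of each file. A minimal sketch for computing it locally is shown below; SHA-256 is an assumption here (the post does not say which checksum is used), and the function name and chunk size are illustrative. Looking the resulting value up on a community site is not shown.

```python
import hashlib


def file_sha256(path, chunk_size=65536):
    """Return the SHA-256 hex digest of the file at `path`.

    The file is read in chunks so that large payloads need not fit in memory.
    """
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()
```

Only the digest is ever searched for, so the file itself never leaves our control.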

One Time Keys

The symmetric encryption outlined in Figure 3 above can be improved to encompass a one-time online key in addition to the "internal" secret mentioned above. Once the online key has been retrieved by our payload, it can simply be removed, preventing any analysts from subsequently decrypting the communications, even with knowledge of the internal secret.

secretencryptionkey = sha256(onlinekey + environmentvariable)

It's yet another tripwire - if we see the online key being requested for a second time, the game is up.
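
The key derivation above can be written out in Python roughly as follows. This is a sketch under assumptions rather than the implementation used in the engagement: plain string concatenation and UTF-8 encoding are guesses at what the formula implies, and the function and parameter names are hypothetical.

```python
import hashlib
import os


def derive_key(online_key: str, environment_value: str) -> bytes:
    """Return sha256(online_key + environment_value) as a 32-byte key."""
    material = (online_key + environment_value).encode("utf-8")
    return hashlib.sha256(material).digest()


def derive_key_from_env(online_key: str, variable_name: str):
    """Derive the key from a named environment variable.

    Returns None when the variable is not set, i.e. when running outside
    the environment the key was built for.
    """
    value = os.environ.get(variable_name)
    if value is None:
        return None
    return derive_key(online_key, value)
```

Both inputs are needed to reproduce the key, so removing the online value after first use leaves the environment variable alone insufficient for decryption.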

An End-To-End Example

In this blog post, we've disclosed several generic techniques that both we and real attackers use. Let's bring it all together in a real-world, end-to-end example.

We’ve recently conducted a simulated attack for a client. We performed a comprehensive open source reconnaissance project and identified several hundred users, and in many cases their position within the company.

Given the timing, the DDE weakness recently disclosed in the Office suite seemed to be a good exploit choice. We chose the Outlook “flavour” of this exploit, whereby code-execution would occur if the target replies to an email message. The phishing campaign related to a hotel booking for the intended target, all from generic webmail accounts not attributable to Cyberis.

The first 10 emails sent did not result in a response. Most importantly, our ‘flag’ was not triggered, nor were our tripwires. In this attack we used secret Gists on GitHub, and ‘Stars’ to indicate whether or not an exploit had succeeded. As we were sure the attack had not yet been reported, we could continue to deliver the same attack to more of our identified targets.

Finally, a victim replied. The cover story worked, and a reply was received stating they knew nothing of the booking. The reply executed a very short PowerShell code snippet which downloaded and executed a Gist from GitHub via a Google-shortened URL, and subsequently starred a certain Gist known to us using the GitHub API. A login registry key was created that spawned another hidden PowerShell script and executed code from another Gist, also behind a Google-shortened URL, upon next login. As an additional layer of protection, the PowerShell script was encrypted using a specially chosen environment variable known to be set within our target environment.

We could check the timing of the attack, and the analytics relating to the shortened links. All timings and flags looked consistent with what we’d expect from a real victim, and none of our tripwires had been triggered. Time to move to our full RAT installation.

We waited for the next reboot and replaced the contents of the online Gist with our fully functional RAT. This also used GitHub as a C2 channel, parsing Gist revisions for tasked commands and updating Gist revisions with results. At this point, we were effectively sat at the victim’s machine, with access to all the network resources and data assets that would be available to our victim.

Why Do We Do This?

We’re using advanced techniques here to compromise our intended victims and remain under the radar. The primary objective is not to prove how great Cyberis is at delivering real attacks; rather, it is to assess the detective controls within our target’s organisation, and most importantly, the enacted response function when an attack is detected or reported.

Of course, many recommendations come out of such an engagement, typically involving application whitelisting, hardening both the perimeter and end-user workstations, and programmes of user education – in summary, a defence-in-depth approach. Ultimately, however, such engagements give our clients the opportunity to find the gaps in their defence - the chinks in their armour - and to learn first-hand how they fare against advanced threat actors.

If you take just one thing from this post, it should be this: no longer assume attacks only originate from “bad” sources. Trusted sites can be just as dangerous.
